05 Nov 10, 16:33

Tatsumi Ryusui and Starcraft II and emacs

Over the last year in Berlin I worked on five collaborative music/performance/art projects that I’ve been meaning to post.

In the summer as a part of 48 Stunden Neukölln I did a collaboration with Tatsumi Ryusui (myspace).

Normally Tatsumi does experimental electronics improvisation and I do computer music or sonification stuff, but we were asked to play something a bit more poppy.

Tatsumi keeps everything real and is always exhibiting the utmost level of radness, so I couldn’t pass this opportunity up. (After all, computer musicians secretly or publicly don’t like computer music and are always longing to find some pop outlet side-project to get some consolation for their self-imposed complexes.) Just kidding. I actually heard someone make this accusation for the general case. I must still be upset to post it here.

Tatsumi in white cow, me in aloha attire (it was Friday):

Untitled from Tatsumi Ryusui on Vimeo.

Tatsumi played guitar and sang kayoukyoku, which is kind of between Japanese folk and pop.

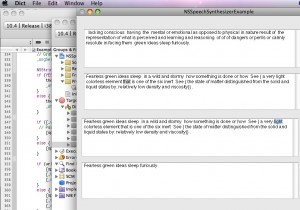

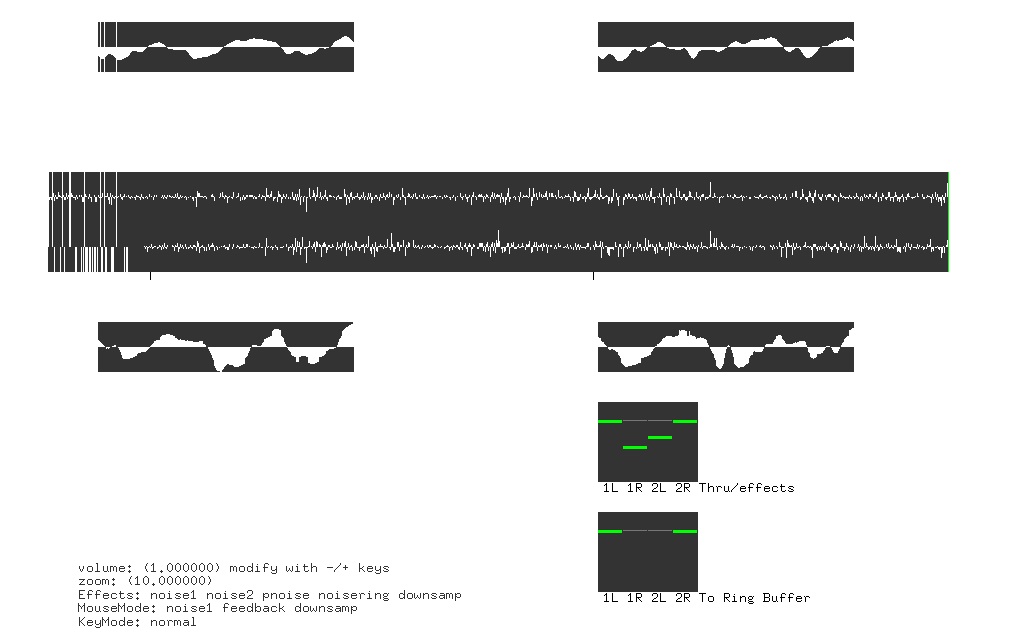

I made an effects-processing program with an interface inspired by Emacs and Starcraft II.

You shouldn’t be freaking out. Starcraft II is a totally legit model for user interface. In general, games are to interface as porn was to the internet (although I can’t vouch for the correct SAT answer). As a competitive game it requires players to command with accuracy and dexterity while processing a huge amount of input. It is perhaps the most demanding game in terms of user actions per minute (clicks, keystrokes). And it is essentially a performance environment, which is why some of the most popular channels are about watching shoutcasts of Starcraft II, like Day9, HuskyStarcraft, and HDStarcraft. Not to mention it has a great community that amazingly includes genuinely cool, genuinely interested, genuinely cute girls like Press Heart To Continue. Yes, my love for Starcraft is probably subject to second-hand embarrassment. I’m not ashamed, so please learn to deal with it yourself or get in.

When using (text) programming as a medium for composition, I often feel the need to incorporate envelopes to control parameters such as volume. This is the kind of thing the mouse is great for, and we’re pretty good at using it. It is of course imperfect for ‘drawing’, and I suck at ‘drawing’ and drawing to begin with (just as MouseX.kr and MouseY.kr in SuperCollider often bring snickers), but if processed correctly the mouse has many advantages for meeting this need. No one’s saying you have to do a direct motion-to-parameter mapping. Also, we are all super fucking good at using the mouse now. If you have practiced an instrument and somehow got decent at it, you know how much time it takes. Chances are you spent a comparable amount of time using a mouse. Yet it’s somehow odd to think of it as practice.
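To make "processed correctly" a bit more concrete, here is a minimal sketch of one non-direct mapping: normalize the mouse position, smooth out the jitter, then map it exponentially so equal mouse distances give equal ratios (handy for frequency or delay time). This is not the code from the performance. The names `normalize`, `expMap` and `Smoother`, and the choice of a one-pole smoother, are assumptions made purely for illustration.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

// Turn a raw pixel coordinate into a value in [0, 1].
// `extent` is the window width or height in pixels (hypothetical input).
inline float normalize(float pixel, float extent) {
    assert(extent > 0.0f);
    return std::max(0.0f, std::min(1.0f, pixel / extent));
}

// Exponential map from [0, 1] to [lo, hi]: equal steps of t multiply
// the output by a constant factor instead of adding a constant amount.
inline float expMap(float t, float lo, float hi) {
    assert(lo > 0.0f && hi > lo);
    return lo * std::pow(hi / lo, t);
}

// One-pole lowpass that irons out shaky hand movement.
// coeff = 0 means no smoothing; values close to 1 mean heavy smoothing.
struct Smoother {
    float coeff;
    float state;

    float process(float target) {
        state = coeff * state + (1.0f - coeff) * target;
        return state;
    }
};
```

In use, each mouse event would go through `normalize`, then `Smoother::process`, then `expMap` before reaching the effect parameter, so the hand only has to be roughly right.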

Emacs is also great because its key input lets you do what you want as fast as possible. In general you don’t touch the mouse for commands; you just press a combination of keys to execute them.

The program I made was essentially a large effects box that allowed effects like delay/distortion/phaser/downsampling, mixing, zooming in on buffers, and quick parameter-to-mouse mapping based on keys/mouse position.
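The key-based parameter-to-mouse mapping could be sketched roughly like this: a key picks which parameter the mouse currently drives, and mouse movement only touches that parameter. This is a guess at the general shape, not the actual program. `Param`, `MouseMapper`, the key bindings and the ranges are all hypothetical, and it uses plain C++ with no openFrameworks calls.

```cpp
#include <cassert>
#include <map>
#include <string>

// One controllable effect parameter (hypothetical layout).
struct Param {
    std::string name;
    float lo;
    float hi;
    float value;
};

class MouseMapper {
public:
    // Associate a key with a parameter.
    void bind(char key, const Param& p) {
        assert(p.hi > p.lo);
        params_[key] = p;
    }

    // Pressing a bound key makes its parameter follow the mouse.
    // Returns false (and changes nothing) for an unbound key.
    bool select(char key) {
        if (params_.find(key) == params_.end()) return false;
        active_ = key;
        hasActive_ = true;
        return true;
    }

    // Releasing the key detaches the mouse from every parameter.
    void release() { hasActive_ = false; }

    // t is the normalized mouse position in [0, 1].
    void mouseMoved(float t) {
        if (!hasActive_) return;
        if (t < 0.0f) t = 0.0f;
        if (t > 1.0f) t = 1.0f;
        Param& p = params_.at(active_);
        p.value = p.lo + t * (p.hi - p.lo);
    }

    float value(char key) const { return params_.at(key).value; }

private:
    std::map<char, Param> params_;
    char active_ = 0;
    bool hasActive_ = false;
};
```

The point of the design is that parameters keep their last value when the key is released, so one hand can hop between effects while the other stays on the mouse.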

It looked like this:

It was done with openFrameworks, a minimal cross-platform C++ framework which I’ve been using in some Institute for Algorhythmics projects.

We’re going to play again on November 14 at Schlesisches Tor. I’ll post an update and info about other projects soon.