This conference was organized by the Asia Computer Music Project, which aims to bring together the computer music communities of Asian countries. It was held on Dec 16-18, 2010, making this post exactly a month tardy.

Pipa performer En-Ju Lin (Taiwan) and Biwa performer Kumiko Shuto (Japan) end the concert with their related instruments. Photo: Michael Cohen

The conference was hosted by Tokyo Denki University and organized by Naotoshi Osaka, under whom I studied in 2006. I visited Osaka-sensei last year, when he told me about the situation that led him to start the Asia Computer Music Project. In Asian academia there is a strong culture of domestic communities within a field, but when it comes to international communities, Asian countries tend not to engage with their Asian neighbors and instead look directly to Western nations. I can only speak from my limited academic experience, but even within computer science, the ‘prestigious’ organizations like IEEE and ACM, and journals like Nature, tend to be based in the West. In the case of computer music, more than 90% of the International Computer Music Conferences (since 1974) have been held in Europe or the U.S. There is no problem or unfairness in this fact itself, but if as a result Asian countries ignore neighbors who share the same interests, that is a disappointing and sub-optimal use of geography, since these countries could thrive off each other. The Japanese case is interesting because of its island nature and politics of isolation. Osaka-sensei, a believer in community, is always trying very hard to bring like-minded people together, so this seems like a natural project for him. I am personally very interested to see how it works out.

The conference in December was actually the third event organized by the ACMP. The earlier ones were in Gyeongju and Daegu, Korea. Seongah Shin (Keimyung University) organized and hosted the previous meetings at her institution, and was also present at this one, giving a performance of experimental film and music.

Attendance at the lectures was small, as you might expect for a new organization, and this gave the conference an excitement that seems appropriate to its potential.

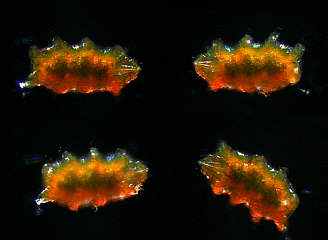

The paper topics (pdf proceedings here) ranged from an analysis of Ligeti etudes by former Ligeti student Mari Takano (Toho-Gakuen Arts Tanki University, Bunkyo University), which gave some interesting insights into Ligeti’s thoughts on the limits of how far interesting visual concepts can be transferred to music, to a user-experience talk by Hiroki Nishino (National University of Singapore) on the problems expert users face in computer music programming environments such as SuperCollider. There were many other talks that interested me. I was very surprised and intrigued to see Koji Kawasaki, author of a well-researched, bible-sized text on Japanese electronic music (日本の電子音楽), asking questions from the audience. Richard Dudas (Hanyang University), composer and former Max 4 programmer (yes, implementation side), gave a piece and presentation that used a very nice technique for manipulating timbre: filling and removing the gaps between harmonics and playing with the odd/evenness of the resulting spectrum, which produces a very convincing range of timbres from saxophone to clarinet. There was also an awesome talk and demonstration by Takayuki Hamano on controlling music via brain wave sensors, which managed to work without the icky gel stuff. Hiroko Terasawa is working on sonification of videos of my second-favorite microorganism (after the tardigrade), C. elegans. (I spent a lot of time debugging multiple sequence alignment code for this critter.) I once tried sonifying video myself, but it was too hard to get a perceptual resolution anywhere near the visual one, so I gave up. Here’s hoping she continues to work with sonification.
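For readers curious about the odd/even harmonic idea mentioned above, here is a minimal additive-synthesis sketch in Python. It is only my own illustration and not Dudas's implementation: the function names, the 1/n amplitude rolloff, and the single `even_gain` parameter are all assumptions made for the example. It relies on the well-known fact that a clarinet-like tone is dominated by odd harmonics, while a saxophone-like tone contains both odd and even ones.

```python
import math

def harmonic_amplitudes(num_harmonics, even_gain):
    """Amplitudes for harmonics 1..num_harmonics with a 1/n rolloff (assumed).

    Even-numbered harmonics are scaled by even_gain (hypothetical parameter):
    1.0 keeps the full spectrum, 0.0 leaves only odd harmonics.
    """
    amps = []
    for n in range(1, num_harmonics + 1):
        base = 1.0 / n
        amps.append(base if n % 2 == 1 else base * even_gain)
    return amps

def synthesize(freq_hz, even_gain, duration_s=1.0, sample_rate=44100,
               num_harmonics=20):
    """Sum sine partials into a list of samples normalized to [-1, 1]."""
    nyquist = sample_rate / 2.0
    # Keep only partials below the Nyquist frequency to avoid aliasing.
    partials = [
        (n, amp)
        for n, amp in enumerate(harmonic_amplitudes(num_harmonics, even_gain), 1)
        if freq_hz * n < nyquist
    ]
    total = sum(amp for _, amp in partials) or 1.0
    samples = []
    for i in range(int(duration_s * sample_rate)):
        t = i / sample_rate
        value = sum(amp * math.sin(2.0 * math.pi * freq_hz * n * t)
                    for n, amp in partials)
        samples.append(value / total)
    return samples

# Sweep from a full spectrum toward an odd-only (more hollow) spectrum.
tones = [synthesize(220.0, gain, duration_s=0.1) for gain in (1.0, 0.5, 0.0)]
```

Sweeping `even_gain` from 1.0 down to 0.0 gradually removes the even harmonics, which is a crude stand-in for the "filling and removing the gaps" described in the talk. The samples could be written out with the standard `wave` module to listen to the result.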

The night concerts were in a large hall and were well attended. As you can see from the picture, there was a Taiwanese piece for tape by composer Chih-Fang Huang (Yuan Ze University), performed by En-Ju Lin (Heidelberg University) on pipa. The first night featured the energetic pieces of younger Japanese composers such as Azumi Yokomizo and Yuka Nakamura, who are students of Yuriko Kojima (Shobi University) and Shintaro Imai (Kunitachi College of Music), respectively. Imai also had a playful video piece doing what hi-def does well: focusing on the micro level. I was also happy and surprised to see that Takayuki Rai (Lancaster Institute for the Contemporary Arts), whose music I am a fan of, was there; I got to enjoy his piece and shake his hand.

When I am moving cities, I find myself focusing more on how communities function. Since I’m planning to go to Japan soon and wish to maintain my academic relationships, I hope that I get to see this project evolve. That’s all for now.